OpenAI announces open-weight AI reasoning model 'gpt-oss'; the lightweight version can run on laptops and smartphones

On August 5, 2025, OpenAI announced the release of gpt-oss, a free open-weight model that can run on a laptop. This model is OpenAI's first open-weight language model since

Introducing gpt-oss | OpenAI

https://openai.com/ja-JP/index/introducing-gpt-oss/

openai/gpt-oss-120b · Hugging Face

https://huggingface.co/openai/gpt-oss-120b

openai/gpt-oss-20b · Hugging Face

https://huggingface.co/openai/gpt-oss-20b

OpenAI releases a free GPT model that can run on your laptop | The Verge

https://www.theverge.com/openai/718785/openai-gpt-oss-open-model-release

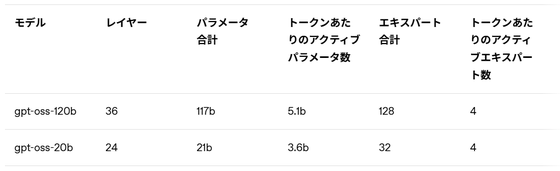

gpt-oss comes in two sizes: 'gpt-oss-120b' with 120 billion parameters and 'gpt-oss-20b' with 20 billion parameters. The 120b model runs on a single 80GB NVIDIA GPU and is said to perform comparably to OpenAI's closed model o4-mini, while the 20b model is said to perform on par with o3-mini. The 20b model can also run with just 16GB of memory, making it suitable for high-end laptops and mobile devices such as smartphones.
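The memory figures above can be sanity-checked with some rough arithmetic. The sketch below estimates the memory needed for the weights alone at a few precisions. The bit widths are assumptions chosen for illustration (the article does not say which precision the released weights use), and the estimate ignores activations, the KV cache, and runtime overhead.

```python
def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


# Parameter counts and memory budgets as quoted in the article.
MODELS = {
    "gpt-oss-120b": {"params": 120e9, "budget_gb": 80},
    "gpt-oss-20b": {"params": 20e9, "budget_gb": 16},
}

# Hypothetical precisions to compare; not taken from the article.
PRECISIONS = {"16-bit": 16, "8-bit": 8, "4-bit": 4}


def memory_table() -> dict:
    """Return {model: {precision: weight memory in GB}}."""
    return {
        name: {
            label: weight_memory_gb(spec["params"], bits)
            for label, bits in PRECISIONS.items()
        }
        for name, spec in MODELS.items()
    }


if __name__ == "__main__":
    for name, row in memory_table().items():
        budget = MODELS[name]["budget_gb"]
        for label, gb in row.items():
            verdict = "fits" if gb <= budget else "does not fit"
            print(f"{name} at {label}: {gb:.0f} GB, {verdict} in {budget} GB")
```

Under these assumptions, only the 4-bit row fits within the quoted budgets, which suggests the stated hardware requirements rely on some form of low-precision weights.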

gpt-oss uses a

It also natively supports context lengths of up to 128k tokens, and its tokenizer 'o200k_harmony', a superset of the tokenizer used for OpenAI o4-mini and GPT-4o, has also been open-sourced.

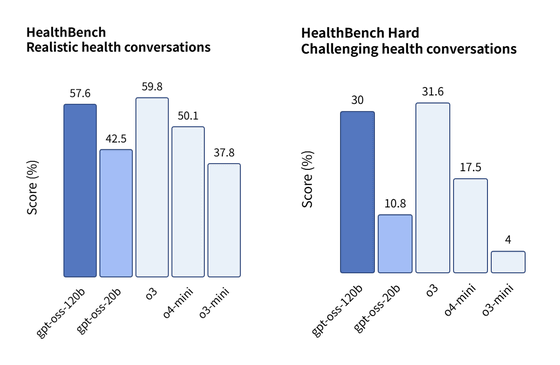

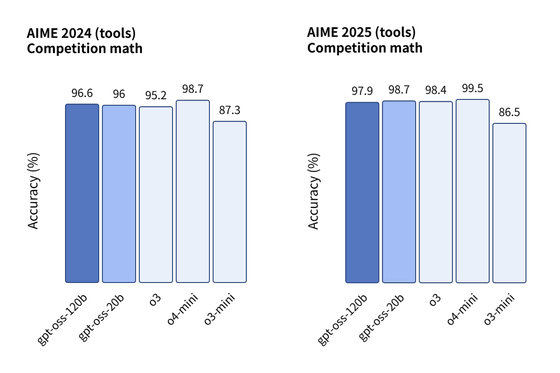

OpenAI reports that gpt-oss-120b has demonstrated capabilities comparable to or even surpassing the existing closed model o4-mini, particularly in specialized domains such as health and mathematics.

In HealthBench, a benchmark that measures health-related question-answering ability, gpt-oss-120b scored 57.6% in an evaluation simulating 'realistic health conversations,' beating o4-mini's score of approximately 50%. In HealthBench Hard, which evaluates more difficult 'challenging health conversations,' gpt-oss-120b scored 30%, significantly outperforming o4-mini's 17.5%.
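To put those margins in perspective, the short sketch below computes the gap in percentage points and as a relative improvement, using only the scores quoted above. The o4-mini HealthBench score is given only as 'approximately 50%', so the first row is an estimate.

```python
# Scores as quoted in the article, in percent.
SCORES = {
    "HealthBench": {"gpt-oss-120b": 57.6, "o4-mini": 50.0},  # o4-mini value is approximate
    "HealthBench Hard": {"gpt-oss-120b": 30.0, "o4-mini": 17.5},
}


def margin(benchmark: str) -> tuple[float, float]:
    """Return (gap in percentage points, relative improvement in percent)."""
    scores = SCORES[benchmark]
    points = scores["gpt-oss-120b"] - scores["o4-mini"]
    relative = points / scores["o4-mini"] * 100
    return points, relative


if __name__ == "__main__":
    for name in SCORES:
        points, relative = margin(name)
        print(f"{name}: +{points:.1f} points ({relative:.1f}% relative)")
```

The absolute gap is larger on the harder benchmark, and because o4-mini's baseline there is lower, the relative gap is larger still.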

Additionally, in the

OpenAI claims that gpt-oss can handle a wide range of tasks, from complex reasoning and web search to coding and agentic operation. It is also compatible with OpenAI's Responses API, and developers can trade off latency against performance by adjusting a setting called 'reasoning_effort'.
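As a rough illustration of the reasoning effort setting, the sketch below builds a Responses API request and sends it with the official `openai` Python package. Several details are assumptions not stated in the article: the three effort levels, the helper names `build_request` and `send`, and the idea that the model is served locally behind an OpenAI-compatible endpoint at a URL you supply.

```python
from typing import Any

# Assumed effort levels; the article names the setting but not its values.
VALID_EFFORTS = ("low", "medium", "high")


def build_request(prompt: str, effort: str = "medium",
                  model: str = "gpt-oss-20b") -> dict[str, Any]:
    """Build keyword arguments for a Responses API call (hypothetical helper)."""
    if effort not in VALID_EFFORTS:
        raise ValueError(f"effort must be one of {VALID_EFFORTS}, got {effort!r}")
    return {"model": model, "input": prompt, "reasoning": {"effort": effort}}


def send(request: dict[str, Any], base_url: str, api_key: str = "not-needed") -> str:
    """Send the request to an OpenAI-compatible server and return the text output."""
    # Imported lazily so build_request works without the package installed.
    from openai import OpenAI

    client = OpenAI(base_url=base_url, api_key=api_key)
    response = client.responses.create(**request)
    return response.output_text


if __name__ == "__main__":
    # Lower effort should favor latency; higher effort should favor answer quality.
    fast = build_request("Summarize the Apache 2.0 license in one sentence.", effort="low")
    print(fast)
    # To actually send it, pass the URL of your own server (assumed, not from the article):
    # print(send(fast, base_url="http://localhost:8000/v1"))
```

Keeping the request builder separate from the network call makes it easy to try the same prompt at different effort levels and compare latency.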

A distinctive feature of the model is that it exposes the chain of thought (CoT) it follows to arrive at an answer, which makes it easier to monitor the model for misbehavior or misuse. OpenAI did not apply direct supervision to the CoT, and instead wants to give developers and researchers the opportunity to study and implement their own monitoring systems.

OpenAI also emphasizes that gpt-oss is its most rigorously tested model to date. It has undergone external evaluation by specialist firms for risks in areas such as cybersecurity and biological warfare, and harmful information related to chemical, biological, radiological, and nuclear (CBRN) threats was filtered out of the pre-training data. In addition, post-training used deliberative alignment and the instruction hierarchy to teach the model to refuse unsafe prompts and resist prompt injection.

OpenAI also tested how well the model resists attempts by malicious users to fine-tune it for dangerous purposes using specialized datasets. As part of its contribution to building a safe ecosystem, OpenAI announced a Red Teaming Challenge with a total prize pool of $500,000 (approximately 75 million yen) aimed at identifying new safety issues.

gpt-oss is released under the Apache 2.0 license, which permits commercial use and modification. Anyone can download it for free through major platforms such as Hugging Face, Databricks, Azure, and AWS, and Microsoft has announced that it will provide a version optimized for Windows devices through ONNX Runtime. OpenAI has also partnered with hardware manufacturers such as NVIDIA, AMD, and Groq, as well as with many other platforms such as vLLM and Ollama, to support use in a wide range of environments.
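For readers who want to fetch the weights from Hugging Face, a minimal sketch using the `huggingface_hub` package might look like the following. The repository IDs come from the Hugging Face pages linked above. The helper names `repo_for` and `download` and the `./models` target directory are hypothetical, and the download is large, so check available disk space first.

```python
from pathlib import Path

# Repository IDs from the Hugging Face pages linked in this article.
REPOS = {"120b": "openai/gpt-oss-120b", "20b": "openai/gpt-oss-20b"}


def repo_for(size: str) -> str:
    """Map a model size label to its Hugging Face repository ID."""
    try:
        return REPOS[size]
    except KeyError:
        raise ValueError(f"unknown size {size!r}; expected one of {sorted(REPOS)}") from None


def download(size: str, target_dir: str = "./models") -> Path:
    """Download a full model snapshot and return its local path (hypothetical helper)."""
    # Imported lazily so repo_for works without the package installed.
    from huggingface_hub import snapshot_download

    local_dir = Path(target_dir) / f"gpt-oss-{size}"
    return Path(snapshot_download(repo_id=repo_for(size), local_dir=str(local_dir)))


if __name__ == "__main__":
    print("Would download from:", repo_for("20b"))
    # Uncomment to start the actual download:
    # print(download("20b"))
```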

CEO Sam Altman had previously expressed reservations about releasing an open-weight model due to safety concerns, but demand for an open model has grown among developers seeking low cost and high customizability. Co-founder Greg Brockman expressed his excitement about this release, saying, 'Lowering the barriers to access accelerates innovation. Giving developers and companies the opportunity to hack it will lead to amazing things.'

in Software, Posted by log1i_yk